Summary

Call center quality assurance (QA) transforms customer interactions by systematically monitoring, evaluating, and improving agent performance. This guide covers the fundamentals of call quality, explains why QA matters for business success, and provides 11 actionable best practices to continuously enhance your quality assurance program. Modern QA combines human expertise with AI-powered tools to analyze 100% of interactions, deliver real-time coaching, and drive measurable improvements in customer satisfaction and operational efficiency.

A. What is call center quality assurance – and why does it matter?

Call center quality assurance is the systematic process of monitoring and evaluating agent-customer interactions against defined performance and compliance standards and then using that data to coach agents, reduce variation, and improve outcomes at scale.

The business case is well-established: U.S. companies lose an estimated $62 billion annually due to poor customer experiences.

Yet most call centers still monitor just 1 to 3% of calls manually, leaving the other 97 to 99% completely unscored. A well-designed QA program, especially one powered by AI analysis, can review 100% of interactions, cut QA costs by over 50%, and deliver FCR improvements that translate to approximately $286,000 in annual savings per 1% improvement for a mid-sized operation.

In this guide, you will find: a plain-English definition of call center QA, a 6-step process for building your own program, a QA scorecard template you can use starting today, the key KPIs to track, and a review of how AI is transforming QA coverage in 2026.

As Tushar Jain, Enthu.AI’s co-founder, explains:

“A well-run QA program is like having a mirror that reflects the best and worst moments in a call center, helping it improve fast.”

B. How do you build a call center quality assurance program? (6 Steps)

Step 1: Define what a good call looks like

Most QA programs fail before they start because nobody has written down what they’re actually measuring.

Before you score a single call, get your team to agree on specifics: how agents should open calls, what phrases are off-limits, which compliance disclosures are required and in what order, and what the expected resolution timeline looks like.

These don’t need to be lengthy; a one-page reference document is fine. The point is that two different analysts listening to the same call should reach the same conclusion about whether it was handled well.

Step 2: Pick metrics that match your actual problem

Five to eight metrics is about right for most teams. More than that and your scorecards become noise.

The ones you choose should reflect whatever is genuinely hurting you right now.

If you’re losing customers after first contact, FCR and escalation rate matter most. If you’ve had compliance incidents, adherence rate and disclosure completion take priority. If agent attrition is the problem, tone and workload indicators are worth tracking.

Don’t copy someone else’s KPI list; build yours around what your data is already telling you.

Step 3: Build a scorecard with observable criteria

A QA scorecard only works if every criterion describes something a reviewer can actually hear and verify on a call.

“Agent stated the customer’s name at least once during the call” is a criterion. “Agent was not professional” is a judgment, not a criterion.

For each item, define what a full score looks like, what a partial score looks like, and what earns a zero.

Don’t offer too many scoring options; every extra option adds subjectivity. On a 1-to-5 rating scale, for example, nobody can really say what separates a 4 from a 5.

A simple Yes/No/NA scale is a good starting point.

Step 4: Calibrate before you start

Once you have a scorecard, have three different quality analysts, team leads, or supervisors score the same call with it before you go live.

You’ll almost certainly find they score it differently, and that gap is your calibration problem to fix.

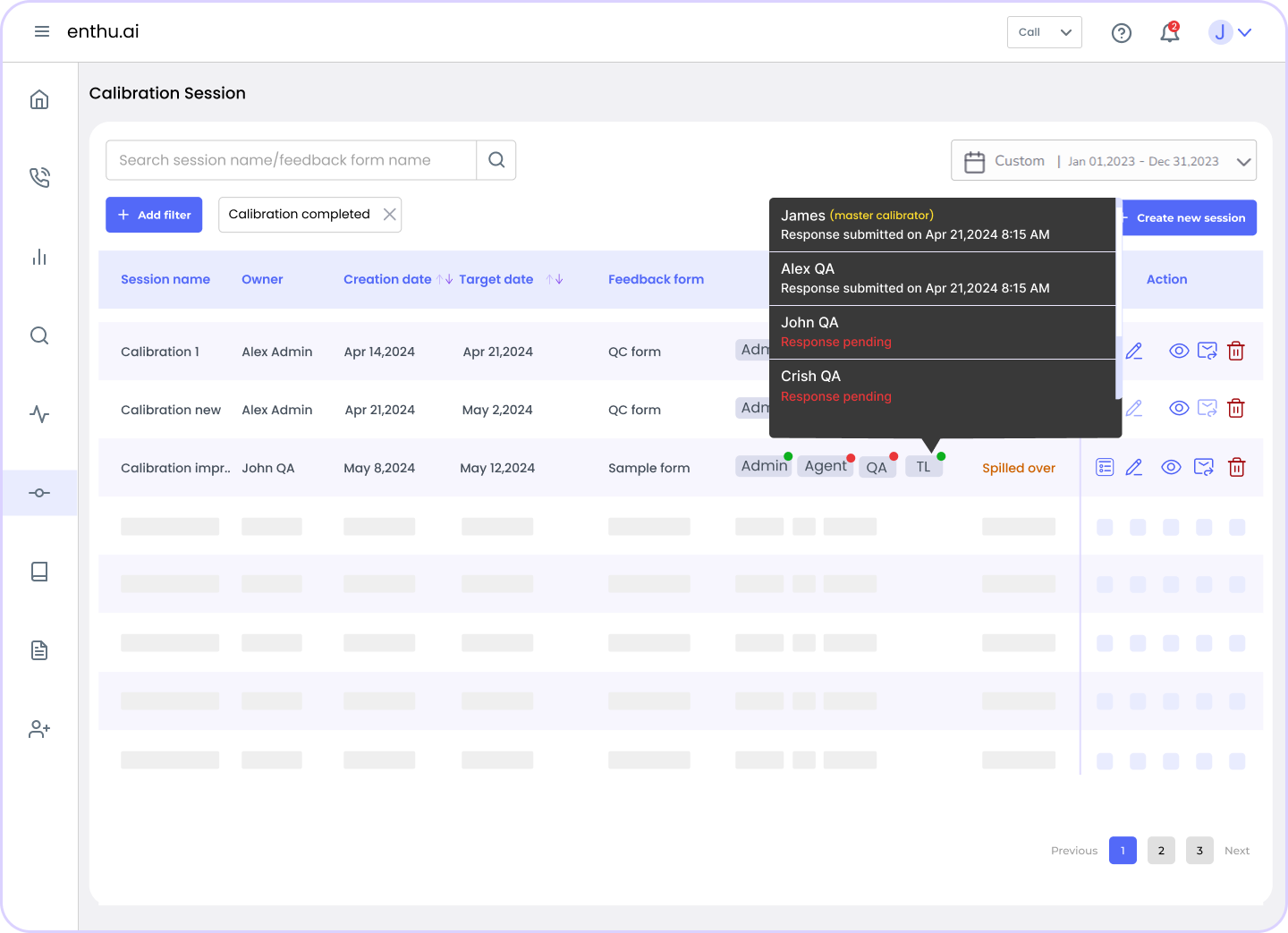

Monthly calibration sessions, where the team scores the same recording independently and then compares, are what keep your QA program honest.

Without them, you end up measuring reviewer preferences rather than agent behavior, which makes the coaching conversations that follow almost impossible to defend.
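One way to put a number on that calibration gap is simple pairwise agreement. The sketch below assumes a Yes/No/NA scorecard and three reviewers scoring the same recording; the function names, reviewer names, and criteria are hypothetical, and percent agreement is only one of several possible measures.

```python
from itertools import combinations

def pairwise_agreement(scores_by_reviewer):
    """Share of (reviewer pair, criterion) comparisons where both agree.

    scores_by_reviewer maps a reviewer name to a dict of
    criterion -> "Yes" / "No" / "NA" for the SAME call.
    """
    matches = 0
    comparisons = 0
    for a, b in combinations(scores_by_reviewer.values(), 2):
        for criterion in a.keys() & b.keys():
            comparisons += 1
            matches += a[criterion] == b[criterion]
    return matches / comparisons if comparisons else 0.0

def disputed_criteria(scores_by_reviewer):
    """Criteria where the reviewers did not all give the same answer."""
    reviewers = list(scores_by_reviewer.values())
    shared = set.intersection(*(set(r) for r in reviewers))
    return sorted(c for c in shared if len({r[c] for r in reviewers}) > 1)

# Hypothetical calibration session: one call, three reviewers.
calibration_call = {
    "analyst_1": {"greeting": "Yes", "disclosure": "Yes", "empathy": "No"},
    "analyst_2": {"greeting": "Yes", "disclosure": "Yes", "empathy": "Yes"},
    "team_lead": {"greeting": "Yes", "disclosure": "No", "empathy": "Yes"},
}

print(round(pairwise_agreement(calibration_call), 2))  # 0.56
print(disputed_criteria(calibration_call))  # ['disclosure', 'empathy']
```

The disputed list is the agenda for the session: each item on it is a criterion whose wording probably needs tightening.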

Step 5: Close the loop

A QA score sitting in a spreadsheet helps nobody.

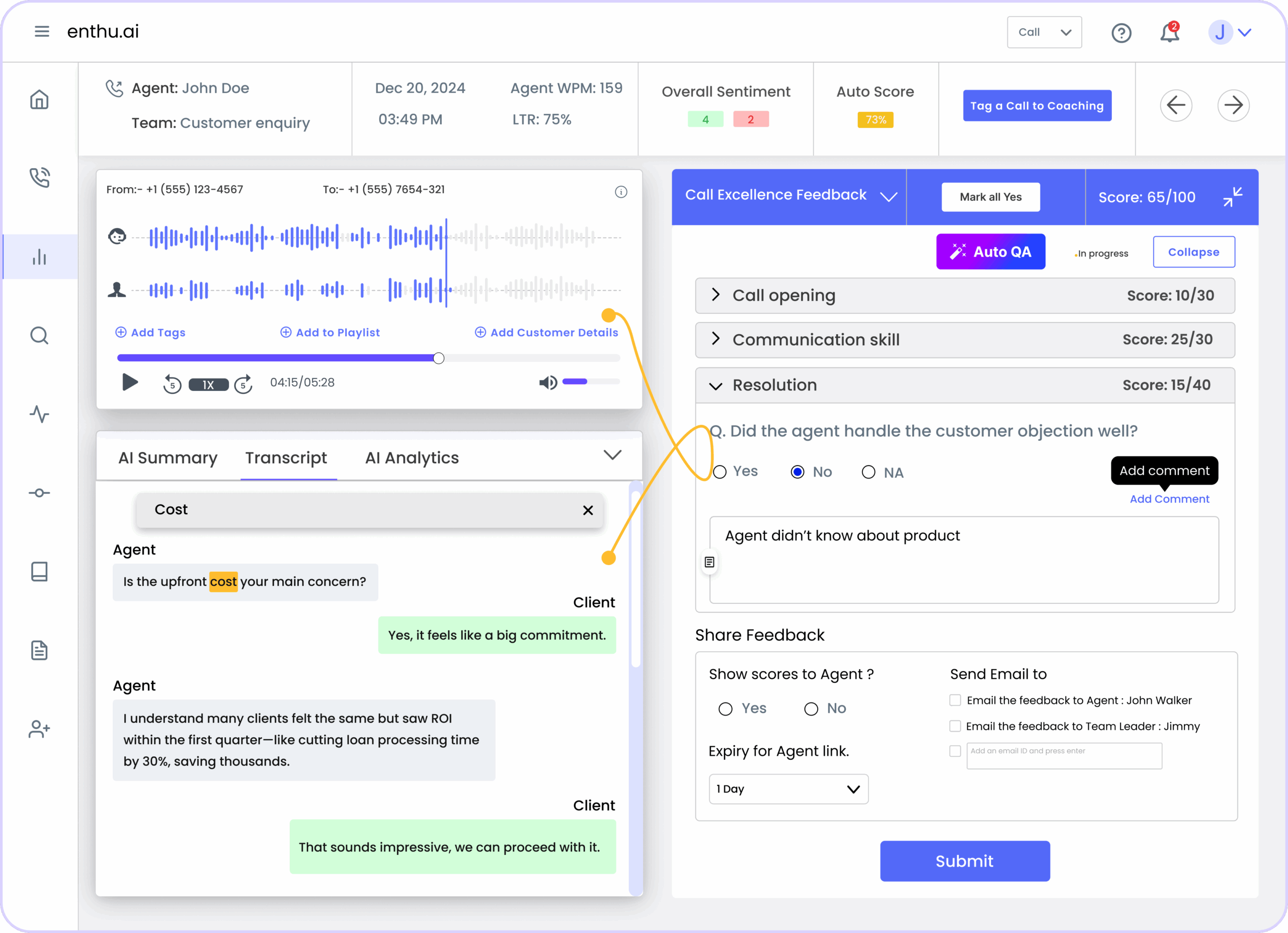

The evaluation needs to reach the agent with enough context to be useful: the specific score, the call clip or timestamp, a clear explanation of where the gap was, and an agreed next step.

Vague feedback like “work on your tone” produces nothing.

Instead, “At the two-minute mark, when the customer mentioned the billing issue, you moved to troubleshooting before acknowledging their frustration: here’s what that should sound like” is something an agent can act on.

Step 6: Fix the system, not just the agent

If the same issue keeps showing up across multiple agents, the problem probably isn’t individual performance. It’s more likely a gap in training, an unclear script, or a process that’s setting people up to fail.

Weekly or monthly reports broken out by team, channel, and issue type will surface these patterns faster than reviewing individual calls.

When you find them, update the materials, not just the agents.
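As a rough illustration of how such a report could separate systemic issues from individual ones, the sketch below counts how many different agents failed each criterion. The record layout, the `min_agents` threshold of 3, and all names are assumptions made for the example, not a prescribed method.

```python
from collections import defaultdict

def systemic_issues(evaluations, min_agents=3):
    """Return criteria failed by at least `min_agents` different agents.

    evaluations is a list of (agent, criterion, passed) tuples.
    """
    agents_by_criterion = defaultdict(set)
    for agent, criterion, passed in evaluations:
        if not passed:
            agents_by_criterion[criterion].add(agent)
    return {
        criterion: sorted(agents)
        for criterion, agents in agents_by_criterion.items()
        if len(agents) >= min_agents
    }

# Hypothetical week of evaluations.
week = [
    ("ana", "refund policy explained", False),
    ("ben", "refund policy explained", False),
    ("cho", "refund policy explained", False),
    ("ana", "greeting", True),
    ("ben", "greeting", False),
    ("cho", "greeting", True),
]

print(systemic_issues(week))
# {'refund policy explained': ['ana', 'ben', 'cho']}
```

Here the refund-policy criterion points at the script or the training material, while the single greeting miss is a one-to-one coaching item.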

C. What KPIs should a call center QA program track?

My honest answer: fewer than most teams think.

Most QA programs start by tracking everything measurable. The result is reports nobody reads and feedback sessions where agents have no idea what to actually improve.

The right call center quality metrics depend on what your team is there to do, whether it’s a sales floor or a support team.

I. Suggested KPIs for a sales contact center

1. Conversion rate (important)

The percentage of calls that result in a completed sale or qualified next step.

Define this precisely before you start tracking it: does a transfer to a closing team count? Does a scheduled callback count? Vague definitions lead to inflated numbers and useless coaching conversations.

2. Talk-to-Listen Ratio (very important)

High-performing sales agents typically talk around 43% of the call and listen for the rest.

Agents who talk more than 60% of the time tend to skip discovery and pitch too early. Most speech analytics platforms like Enthu.AI pull this automatically: it’s one of the easiest signals to act on.
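For teams that want to see how the number is derived, here is a minimal sketch of the calculation. It assumes you already have speaker-labelled segments with start and end times (for example from a diarized transcript); the tuple layout and the `agent` label are hypothetical, and the 60% flag simply reuses the threshold mentioned above.

```python
def talk_ratio(segments, agent_label="agent"):
    """Share of total speaking time that belongs to the agent.

    segments is a list of (speaker, start_seconds, end_seconds) tuples.
    Silence is ignored because only spoken segments are listed.
    """
    agent_time = 0.0
    total_time = 0.0
    for speaker, start, end in segments:
        duration = max(0.0, end - start)
        total_time += duration
        if speaker == agent_label:
            agent_time += duration
    return agent_time / total_time if total_time else 0.0

# Hypothetical two-minute call.
call = [
    ("agent", 0, 20),
    ("customer", 20, 65),
    ("agent", 65, 95),
    ("customer", 95, 120),
]

ratio = talk_ratio(call)
talks_too_much = ratio > 0.60  # threshold taken from the text above
print(f"{ratio:.0%}", talks_too_much)  # 42% False
```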

3. Objection handling score (extremely important)

This one doesn’t exist in service programs.

Your QA program should score how the agent responded when a customer pushed back – did they acknowledge the concern and stay on track, or did they stumble and lose the thread?

Define your three or four most common objections and score each one consistently.

4. Compliance adherence rate (extremely important)

If you’re selling financial products, insurance, or anything regulated, this is non-negotiable.

A missed disclosure can void a contract. Weight it heavily in your scorecard regardless of what else you measure.

Also, this section should be zero-tolerance: if an agent misses one component, they get a zero score, irrespective of how they did in the other parts of the call.

5. Process adherence (important)

Most sales organizations have a defined process – a sequence of steps every phone agent is expected to follow from the moment a lead comes in to the moment a customer closes or goes cold.

QA is one of the few places where someone is actually checking whether that process is being followed.

This covers everything from what was said on the call to what happens after it.

Did the agent inform the customer that a document needs to be uploaded? Was the waiting period clearly defined? Did the agent update the CRM with accurate notes before ending their session? Did the follow-up email go out within the agreed window? Was the next step logged with a realistic date, or left blank? Was the lead correctly categorized so the right person picks it up next?

The sales process exists because the org has figured out, usually through trial and error, what sequence of actions produces the best close rates. And when people skip steps, you lose the ability to diagnose why new business is falling through.

II. Suggested KPIs for a support/service contact center

1. First call resolution (FCR) (extremely important)

FCR measures whether the customer’s issue was resolved without a follow-up contact. It also captures the “wastage” generated in a service contact center: the queries that go unresolved and then need another agent’s time to resolve.

The industry average is 70 to 79%; best-in-class operations clear 85%.

A 1% improvement in FCR translates to roughly $286,000 in annual savings for a mid-sized team (SQM Group, 2024), mostly from reduced repeat contacts.

One caveat: agents who close calls quickly don’t always resolve them. Define your measurement window clearly (24 hours, 48 hours, 7 days) and decide upfront how transfers are counted.
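To show how much the measurement window changes the result, here is a minimal sketch that computes FCR from a contact log. It assumes each contact carries a customer ID, an issue ID, and a timestamp; that layout and the function name are hypothetical. A contact counts as a repeat when the same customer raises the same issue within the window of the previous contact. Transfers are not modelled at all.

```python
from collections import defaultdict
from datetime import datetime, timedelta

def first_call_resolution(contacts, window=timedelta(days=7)):
    """Fraction of cases resolved with a single contact.

    contacts is a list of (customer_id, issue_id, timestamp) tuples.
    Contacts on the same issue that fall within `window` of the
    previous one are grouped into the same case.
    """
    by_issue = defaultdict(list)
    for customer_id, issue_id, ts in contacts:
        by_issue[(customer_id, issue_id)].append(ts)

    cases = 0
    resolved_first_time = 0
    for timestamps in by_issue.values():
        timestamps.sort()
        contacts_in_case = 1
        for prev, cur in zip(timestamps, timestamps[1:]):
            if cur - prev <= window:
                contacts_in_case += 1   # repeat contact on the same case
            else:
                cases += 1              # previous case is closed
                resolved_first_time += contacts_in_case == 1
                contacts_in_case = 1
        cases += 1                      # close the last open case
        resolved_first_time += contacts_in_case == 1
    return resolved_first_time / cases if cases else 0.0

# Hypothetical contact log.
log = [
    ("c1", "billing", datetime(2026, 1, 1, 9, 0)),
    ("c1", "billing", datetime(2026, 1, 3, 10, 0)),  # called back 2 days later
    ("c2", "login", datetime(2026, 1, 2, 11, 0)),
    ("c3", "refund", datetime(2026, 1, 2, 12, 0)),
    ("c3", "refund", datetime(2026, 1, 20, 12, 0)),  # new case, 18 days later
]

print(first_call_resolution(log, window=timedelta(days=7)))    # 0.75
print(first_call_resolution(log, window=timedelta(hours=24)))  # 1.0
```

The same log scores 75% with a 7-day window and 100% with a 24-hour window, which is why the window has to be agreed before anyone compares numbers.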

2. Customer effort score (CES) (important)

This metric measures how easy it was for the customer to get their issue resolved.

CES often predicts churn and repeat contacts better than CSAT does. Customers who found the interaction high-effort are significantly more likely to leave, even when the issue was technically resolved.

3. Knowledge accuracy rate (extremely important)

In support, giving a customer wrong information is often worse than not resolving the issue at all.

If QA reviewers are catching factual errors in how agents describe products, policies, or processes, that’s a clear area for improvement.

4. Compliance adherence rate (extremely important)

Still applies in regulated industries – healthcare, financial services, collections.

Keep it weighted high enough that a compliance failure can’t be averaged away by a strong score on everything else.

Better still, make it zero-tolerance.

5. Average Handle Time (AHT) (important)

AHT is useful as context, not a target.

Teams that optimize too hard for low AHT produce agents who rush customers and skip steps. That creates more calls, not fewer.

An agent with slightly longer AHT and a 92 quality score is usually more valuable than one with a fast call and a 74.

III. What if your team does both sales and support?

Build two separate scoring tracks – one for sales calls, one for service calls – and tag each evaluated call accordingly.

The compliance section can overlap. Everything else should be distinct.

One scorecard that tries to serve both purposes usually ends up serving neither. Sales agents get scored on metrics that don’t reflect their job, and service agents feel the same way.

The extra setup is worth it.

IV. What not to track in your QA program?

NPS at the agent level

Net Promoter Score reflects a customer’s cumulative experience with your brand, not a single call.

Using it to evaluate individual agent performance produces conclusions that are hard to defend and even harder to coach from.

Silence time and talk time as standalone metrics

Fine as diagnostic signals when you’re investigating something specific.

Not scorecard criteria. An agent pausing to find the right answer is doing the right thing.

D. What should a call center QA scorecard look like?

| Evaluation Criterion | Max Score | Weight | Scoring Scale | Observable Behavior |
| --- | --- | --- | --- | --- |
| Greeting and Introduction | 10 | 10% | 0 / 10 | Agent states name, company name, and offers assistance within 10 seconds of call start. |
| Active Listening and Empathy | 20 | 20% | 0 / 10 / 20 | Agent acknowledges customer emotion, paraphrases the issue back, and avoids interrupting. |
| Compliance and Disclosures | 20 | 20% | 0 / 20 (binary) | All required legal disclosures are delivered verbatim and in the correct order (zero tolerance). |
| Problem Resolution Accuracy | 20 | 20% | 0 / 15 / 20 | Agent provides the correct information, follows correct process, and resolves the issue in the first contact where possible. |
| Communication Clarity | 10 | 10% | 0 / 10 | Agent avoids jargon, speaks at an appropriate pace, and confirms understanding before ending. |
| Call Handling Procedure | 10 | 10% | 0 / 10 | Agent follows correct hold/transfer protocol, states reason before placing on hold, and returns within promised timeframe. |
| Closing and Next Steps | 5 | 5% | 0 / 3 / 5 | Agent summarizes resolution, confirms customer satisfaction, and provides reference number or follow-up timeline. |
| Overall Customer Experience | 5 | 5% | 0 / 5 | Holistic impression: would this call represent the brand well to a first-time customer? |

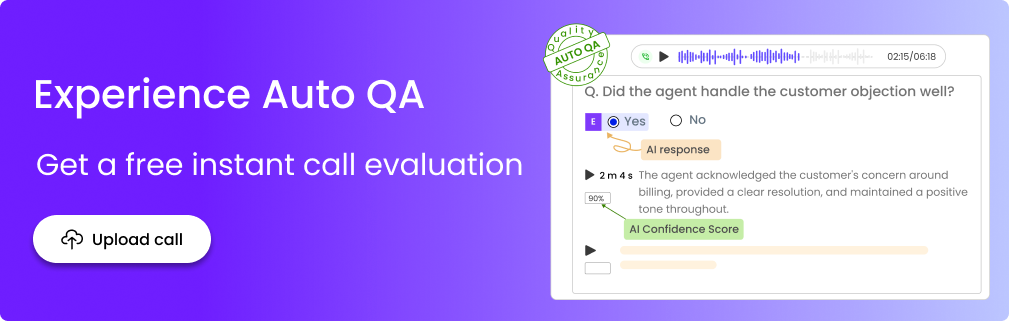
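If you keep the scorecard in a spreadsheet or script, the scoring logic is small. The sketch below is one possible way to encode the table above. The dictionary layout and function name are hypothetical, and it assumes the stricter reading of zero tolerance, where a missed compliance item zeroes the entire call rather than just its own 20 points.

```python
# Max points and allowed scores per criterion, copied from the table above.
SCORECARD = {
    "Greeting and Introduction": (10, {0, 10}),
    "Active Listening and Empathy": (20, {0, 10, 20}),
    "Compliance and Disclosures": (20, {0, 20}),
    "Problem Resolution Accuracy": (20, {0, 15, 20}),
    "Communication Clarity": (10, {0, 10}),
    "Call Handling Procedure": (10, {0, 10}),
    "Closing and Next Steps": (5, {0, 3, 5}),
    "Overall Customer Experience": (5, {0, 5}),
}

# Assumption: anything short of full marks here zeroes the whole call.
ZERO_TOLERANCE = {"Compliance and Disclosures"}

def score_call(awarded):
    """Return the call's total out of 100, or 0 on a zero-tolerance miss."""
    total = 0
    auto_fail = False
    for criterion, (max_points, allowed) in SCORECARD.items():
        points = awarded[criterion]
        if points not in allowed:
            raise ValueError(f"{criterion}: {points} not in {sorted(allowed)}")
        if criterion in ZERO_TOLERANCE and points < max_points:
            auto_fail = True
        total += points
    return 0 if auto_fail else total

# Hypothetical evaluation: full marks except two partial scores.
good_call = {name: max_points for name, (max_points, _) in SCORECARD.items()}
good_call["Active Listening and Empathy"] = 10
good_call["Closing and Next Steps"] = 3

missed_disclosure = dict(good_call, **{"Compliance and Disclosures": 0})

print(score_call(good_call))          # 88
print(score_call(missed_disclosure))  # 0
```

Rejecting scores that are not on the allowed scale keeps reviewers from inventing in-between values, which is the same subjectivity problem the scorecard is meant to remove.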

E. How is AI changing call center quality assurance in 2026?

Traditional call center QA programs review 1 to 3% of calls manually. That means 97 to 99% of customer interactions are never scored, never coached on, and never improved.

AI-powered quality assurance changes this equation entirely.

According to McKinsey, automated QA systems achieve accuracy levels above 90% – compared to 70 to 80% for manual reviewers – while simultaneously reducing QA operational costs by more than 50%. More importantly, AI QA platforms can score 100% of calls in real time, flagging compliance risks, detecting sentiment shifts, and surfacing coaching opportunities that would be invisible in a 2% manual sample.

Despite this, CMS Wire reported that only 25% of call centers have successfully integrated AI QA into their daily operations. That adoption gap represents a significant competitive advantage for organizations that act now.

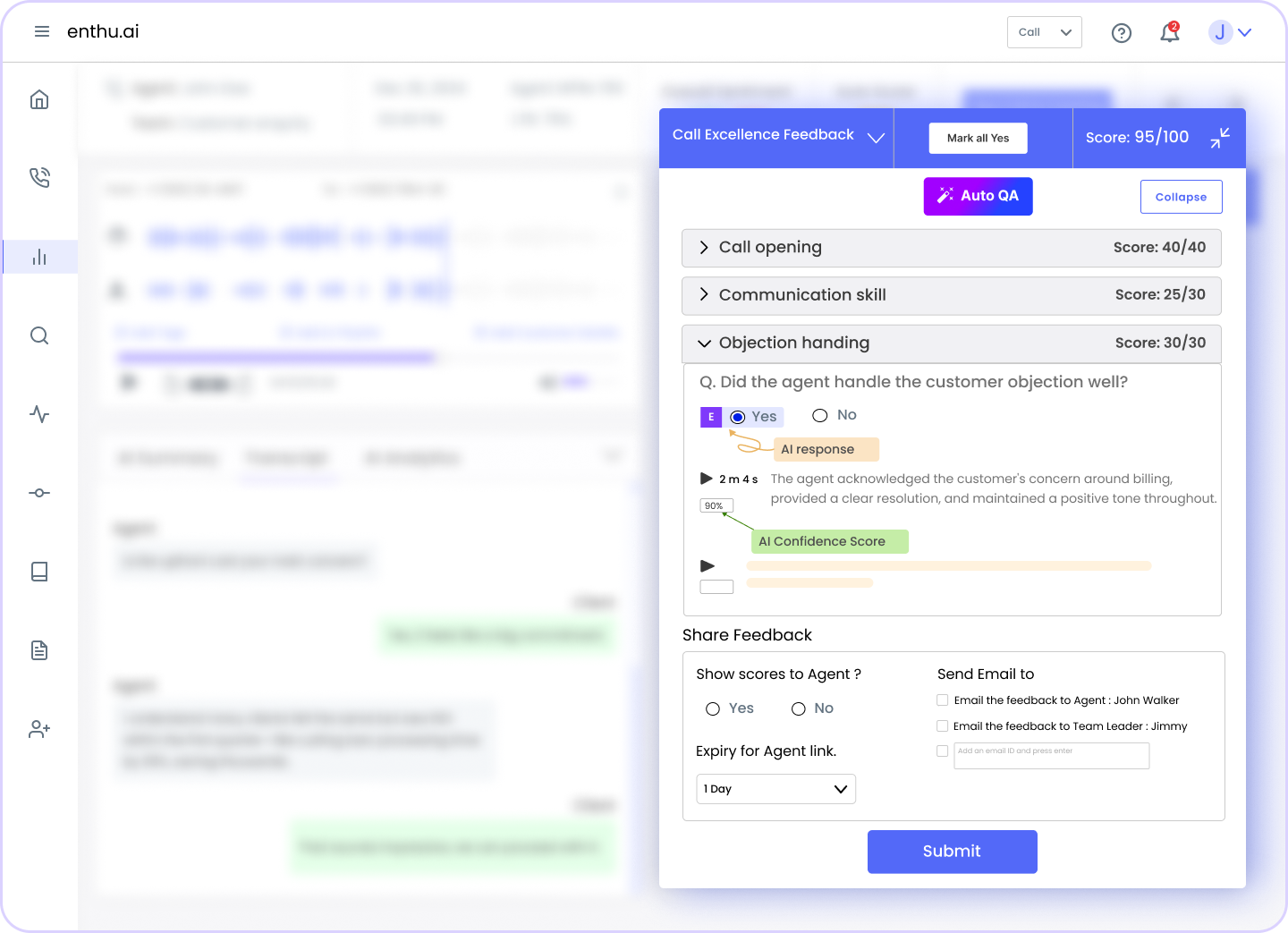

Enthu.AI’s platform is built specifically for this transition: providing automated scoring, calibration tools, and agent coaching workflows that work on 100% of interactions, not a manual sample.

F. 11 best practices for call center quality assurance in 2026

Modern QA demands a strategic approach that combines proven methodologies with innovative technology. Here’s how to build a world-class quality assurance program that drives measurable results.

1. Establish clear, measurable QA standards

Stop the guessing game. Write down exactly what “great service” looks like at your company.

Document everything, from greetings to tone, problem-solving approaches, and closings.

Create simple scorecards with 3-4 sections covering:

- Process adherence: Did they follow the right steps?

- Product knowledge: Did they know their stuff?

- Empathy & tone: Were they genuinely helpful?

- Issue resolution: Did they solve the problem?

Use number scales (0-5) for soft skills and yes/no checkboxes for must-do compliance items. Clear standards eliminate confusion and help agents succeed.

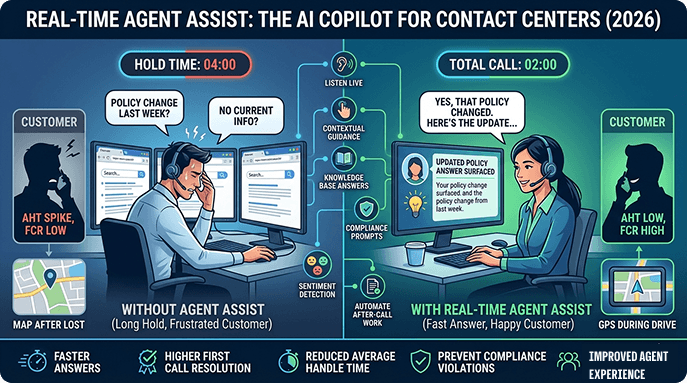

2. Implement AI-powered auto-scoring

Listening to every single call manually? That’s impossible.

AI-powered auto-scoring analyzes 100% of your calls with 90%+ accuracy. The technology identifies customer mood, checks script compliance, and flags potential issues automatically.

It works in 20+ languages and can reduce your average handle time. Your supervisors stop drowning in call reviews and start actually coaching.

AI spots patterns humans miss while cutting evaluation time dramatically. Keep humans involved for complex situations, but let technology handle the routine work.

Enthu pro tip: Use automated QA software for call centers that evaluates 100% of conversations, freeing your QA team to focus on strategic coaching rather than manual call reviews.

3. Use statistically significant sample sizes

Checking five calls per month per agent tells you almost nothing. You need real data, not random snapshots. Aim to evaluate at least 10% of interactions – use AI to make this realistic.

Key requirements:

- Spread reviews throughout the month (not just Monday mornings)

- Include different customer types and complaint calls

- Cover peak hours and slow periods

- Collect a minimum of 5 customer surveys per agent monthly

Bigger sample sizes mean reliable insights you can actually use to improve performance. Small samples lead to bad decisions and unfair evaluations.
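If you want a sanity check on how many reviews a given call volume needs, one common approach is the standard sample-size formula for estimating a proportion (such as a pass rate), with a finite population correction. This is a general statistical rule of thumb rather than something specific to this guide; the function name and the example volumes are hypothetical, and the defaults assume 95% confidence (`z=1.96`), a 5% margin of error, and the most conservative pass rate (`p=0.5`).

```python
import math

def sample_size(population, margin_of_error=0.05, z=1.96, p=0.5):
    """Reviews needed to estimate a pass rate within the margin of error.

    population is the number of calls in the period being assessed.
    """
    n0 = (z ** 2) * p * (1 - p) / margin_of_error ** 2  # infinite population
    n = n0 / (1 + (n0 - 1) / population)                # finite correction
    return math.ceil(n)

print(sample_size(10_000))  # team handling 10,000 calls a month -> 370
print(sample_size(400))     # one agent handling 400 calls a month -> 197
```

The per-agent figure is far beyond what manual review can cover, which is the practical argument for automated scoring when you need agent-level conclusions.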

4. Conduct regular calibration sessions

Ever notice how different supervisors score the same call completely differently? That’s a huge problem.

Weekly or biweekly calibration sessions fix this.

Have your evaluators score identical calls independently, then compare notes. Where did scores differ? Why? Talk it through until everyone’s aligned.

Send calls to reviewers a couple days early so meetings focus on discussion, not listening. Document every decision in a shared file everyone can reference. Invite agents to occasional sessions for their input.

5. Deliver real-time feedback and coaching

Real-time coaching transforms performance instantly. Modern agent coaching tools can alert supervisors during live calls when something’s going wrong – low satisfaction signals, missed compliance steps, rising customer frustration.

Agents can course-correct immediately instead of repeating mistakes for weeks. The AI suggests next-best actions based on what’s happening right now in the conversation.

This immediate feedback loop cuts handle time and boosts outcomes dramatically. Contact centers coaching on four or more calls see the strongest results. Make coaching a conversation, not a lecture.

Enthu.ai Tip: Use an AI tool that provides real-time agent coaching with instant alerts when conversations need supervisor intervention, enabling immediate performance correction.

6. Capture and analyze every interaction

You can’t improve what you don’t measure. Recording every interaction creates a complete record for protection and learning.

Why 100% recording matters:

- Dispute resolution: Proof when customers dispute what was said

- Compliance coverage: Complete audit trails for regulatory requirements

- Pattern recognition: Spot trends across hundreds of conversations

- Training material: Real examples for coaching sessions

Use platforms that automatically organize, transcribe, and tag interactions so they’re searchable later. This gives you the data foundation for coaching, training, and strategic decisions.

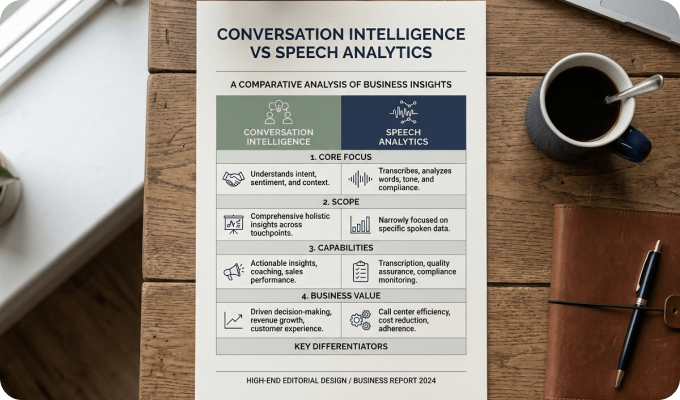

7. Leverage speech and text analytics

Your agents have thousands of conversations monthly – what are customers really saying?

Speech analytics uncovers hidden gold.

The technology automatically identifies trending complaints, emotional triggers, and phrases that lead to great outcomes. It flags compliance risks before they become expensive problems.

Sentiment analysis tracks when conversations turn negative so you can train agents on those exact moments.

Studies show speech analytics can boost productivity by 40%. The AI predicts which customers might churn based on conversation patterns, enabling proactive saves.

Enthu.ai Tip: Leverage sentiment analysis and speech analytics tools that help you automatically surface coaching opportunities and compliance risks across 100% of your interactions.

8. Create channel-specific scorecards

Judging an email by phone call standards makes zero sense. Each channel has unique quality requirements.

Channel-specific focus areas:

- Phone calls: Tone, pace, verbal empathy, active listening

- Emails: Grammar, clarity, completeness, structure

- Chat: Response speed, multitasking, efficient canned responses

- Social media: Public professionalism, brand voice, crisis management

Create separate evaluation forms for each channel reflecting what actually matters there. Weight the scoring differently too – empathy might be heavily weighted on phone calls but less critical in password reset emails.

9. Incorporate customer feedback

Your internal scores only tell half the story; customers have the other half.

Send brief surveys immediately after interactions while experiences are fresh. Keep them short: 2-3 questions max covering satisfaction, resolution, and overall experience. Connect survey results directly to agent records in your CRM.

When customers rate service poorly, trigger immediate follow-up workflows.

Compare what your QA team scores versus what customers report. Use customer feedback to refine your scorecards and training priorities.

10. Engage agents in the QA process

QA programs fail when agents feel monitored instead of supported. Flip the script – involve them from day one.

How to engage agents effectively:

- Let them help design scorecards (builds understanding and buy-in)

- Encourage self-evaluations before supervisor reviews

- Allow formal dispute processes for fairness

- Implement peer reviews between experienced agents

- Share trends and insights openly, not secretively

Peer feedback often lands better than management critique.

11. Link QA to continuous training

QA scores sitting in spreadsheets help nobody. Transform findings into targeted coaching and training. Build personalized development plans addressing each agent’s specific gaps.

When five agents struggle with the same objection, that’s a team training opportunity. Create short microlearning modules tackling single skills; these work better than marathon training sessions. Coach frequently on multiple calls, not once quarterly.

Track whether coached behaviors actually improve in later evaluations. This closed loop proves training ROI. Gamify improvements to make development motivating instead of punitive.

Enthu.ai Tip: Our analytics dashboard automatically identifies coaching opportunities and tracks improvement trends, making it easy to measure training effectiveness and ROI.

G. Achieve efficient call center quality assurance with Enthu.AI

Traditional QA methods can’t keep pace with modern contact center demands. You need technology that evaluates every conversation, not just 1-2% of interactions.

By taking advantage of smart technology, you can optimize your QA processes, making life easier for agents and boosting satisfaction scores and customer retention. Enthu.ai is a holistic contact center solution with call center quality monitoring software features built right in:

- Automatic call recording

- AI-powered call monitoring

- Intelligent call scoring

- Custom scorecards

- Detailed dashboards

- Speech analytics

- AI-generated call summaries

Ready to transform your QA program?

Request a FREE demo today!

H. Frequently Asked Questions About Call Center Quality Assurance

1. What is the difference between call center quality assurance and quality monitoring?

Quality monitoring is the act of listening to or reviewing calls. Quality assurance is the broader program that includes conversation monitoring, scoring, feedback, agent coaching, and continuous improvement. QA is the larger system; monitoring is one input into it.

2. How many calls should a QA analyst review per day?

Manual QA analysts typically review 5 to 10 calls per day at 10 to 15 minutes per call evaluation. However, AI-powered QA platforms can score 100% of calls automatically, making the manual sampling question increasingly obsolete. Best-in-class programs use AI for full coverage and use human analysts for calibration and escalation reviews.

3. What is a good quality score for a call center?

Based on Enthu.AI’s experience across 100+ customers, a score of 88%+ on a 100-point weighted QA scorecard indicates satisfactory performance. We observed this in a data set of 3.8 million calls over a one-month period. Scores below 70 should raise flags and prompt supervisor/TL intervention, usually one-on-one coaching. Best-in-class centers maintain average team scores above 92%.

4. How often should call center QA calibration sessions be held?

Monthly calibration sessions are standard practice in most contact centers. In calibration, 2 to 3 QA analysts independently score the same call, then compare results to identify scoring gaps. Without regular calibration, inter-rater reliability degrades and QA scores reflect reviewer preferences rather than agent performance.