Summary

Speech analytics for call centers uses AI to automatically analyze every customer call — transcribing speech, detecting emotion, flagging compliance issues, and surfacing coaching opportunities. Contact centers using speech analytics report 20–30% cost reductions and 10%+ CSAT improvements (McKinsey). This guide covers how it works, 5 proven use cases, a step-by-step implementation plan, ROI formula, and how to evaluate software.

Your call center generates thousands of conversations every week. Speech analytics is the technology that turns those conversations from noise into competitive intelligence. In this guide: exactly how it works, what it costs, 5 proven use cases, and how to implement it in under 30 days.

Manual QA can review maybe 2–3% of calls. The other 97–98%? They disappear into a black box. An angry customer leaves. A rep skips a legal disclaimer. A golden upsell moment vanishes. And nobody finds out until it’s too late.

That’s the problem speech analytics solves at scale, in real time, across 100% of your calls.

1. What is speech analytics in a call center?

Speech analytics is the process of converting customer calls into searchable, analyzable data using artificial intelligence. It captures every interaction between agents and customers, transcribes the conversation in real time, and uses AI to spot patterns: good, bad, and risky.

A 24/7 contact center handling 200 calls per day, with each call averaging six minutes, generates over 160,000 spoken words daily. That’s more than a million words per week. No QA manager or team lead could realistically sift through that volume manually. AI-powered speech analytics processes it all instantly and at scale.
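The volume estimate above is easy to check with a few lines of arithmetic. The sketch below assumes an average conversational pace of about 135 words per minute across both speakers; that rate is an assumption rather than a figure from this guide, so substitute a value measured on your own calls.

```python
# Rough daily word volume for a contact center.
# WORDS_PER_MINUTE is an assumed conversational pace, not a measured value.
CALLS_PER_DAY = 200
AVG_CALL_MINUTES = 6
WORDS_PER_MINUTE = 135  # assumption: adjust to your own calls


def words_per_day(calls: int, minutes: float, wpm: float) -> int:
    """Return the estimated number of spoken words per day."""
    return round(calls * minutes * wpm)


daily_words = words_per_day(CALLS_PER_DAY, AVG_CALL_MINUTES, WORDS_PER_MINUTE)
weekly_words = daily_words * 7  # a 24/7 operation runs all seven days

print(f"{daily_words:,} words/day, {weekly_words:,} words/week")
```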

Data point: Contact centers using speech analytics have reported 20–30% cost savings, 10%+ CSAT score improvements, and measurably stronger sales conversion rates. Source: McKinsey & Company, ‘From speech to insights: the value of the human voice.’

Here is what speech analytics actively monitors on every call:

- Keyword and phrase detection: Track any term (‘cancel,’ a competitor’s name, ‘not happy,’ ‘late fee’) across 100% of calls automatically.

- Emotion and tone monitoring: Detects frustration, confusion, or satisfaction even when the customer says ‘I’m fine.’ Tone shifts in the middle of a call often signal an escalation about to happen.

- Compliance and script adherence: Flags instantly when a required disclaimer, security check, or legal statement is missed, before a complaint is filed.

- Silence and talk ratios: Long pauses, agent over-talking, or one-sided conversations often signal confusion or disengagement.

- Call categorization: Automatically tags every call by topic (billing, technical support, cancellation, complaint) so managers can see volume trends at a glance.

2. Speech analytics vs voice analytics vs conversation intelligence: what’s the difference?

These three terms are often used interchangeably, but they describe different scopes of technology. Choosing the wrong one for your use case costs time and budget.

Speech analytics

Focuses on analyzing the words spoken and the acoustic patterns in voice calls. Its core strengths are keyword detection, compliance monitoring, and phrase-level pattern recognition. Most commonly used by QA managers and compliance teams in regulated industries.

Voice analytics

A subset of speech analytics that specifically analyzes acoustic properties: tone of voice, pitch, speaking pace, and emotional state. Think of it as reading how something is said rather than what is said. Strong for detecting agent burnout, customer frustration, and de-escalation effectiveness.

Conversation intelligence

The broadest category. Conversation intelligence platforms analyze the full interaction (voice, text, context, CRM data, and outcomes) to generate coaching recommendations, revenue insights, and customer experience strategy. Enthu.AI is a conversation intelligence platform that includes speech analytics as a core component.

Comparison: speech analytics vs voice analytics vs conversation intelligence

| Feature | Speech analytics | Voice analytics | Conversation intelligence |
| --- | --- | --- | --- |
| What it analyzes | Audio patterns, keywords, emotion | Acoustic data: tone, pitch, pace | Full conversation: voice + text + context |
| Primary use | QA & compliance monitoring | Emotion & agent performance | Coaching, CX strategy, revenue |
| Real-time capability | Sometimes | Yes | Yes (advanced platforms) |
| Transcription included | Yes | Partial | Yes, full |
| Who uses it | QA managers, compliance teams | Operations managers | QA, sales, CX, training teams |
| Best for | Regulated industries (finance, insurance, health) | Large outbound sales teams | End-to-end contact center intelligence |

For most call centers: If your primary goal is compliance monitoring and QA scoring, speech analytics is sufficient. If you want to improve agent performance, coaching outcomes, and revenue metrics, you need a full conversation intelligence platform like Enthu.AI.

3. How does speech analytics work? The 3-step technology breakdown

Behind every speech analytics insight is a pipeline of AI technologies working in sequence. Understanding the flow helps you evaluate vendors, set accuracy expectations, and explain ROI to your leadership team.

Step 1: Call capture and transcription (NLP layer)

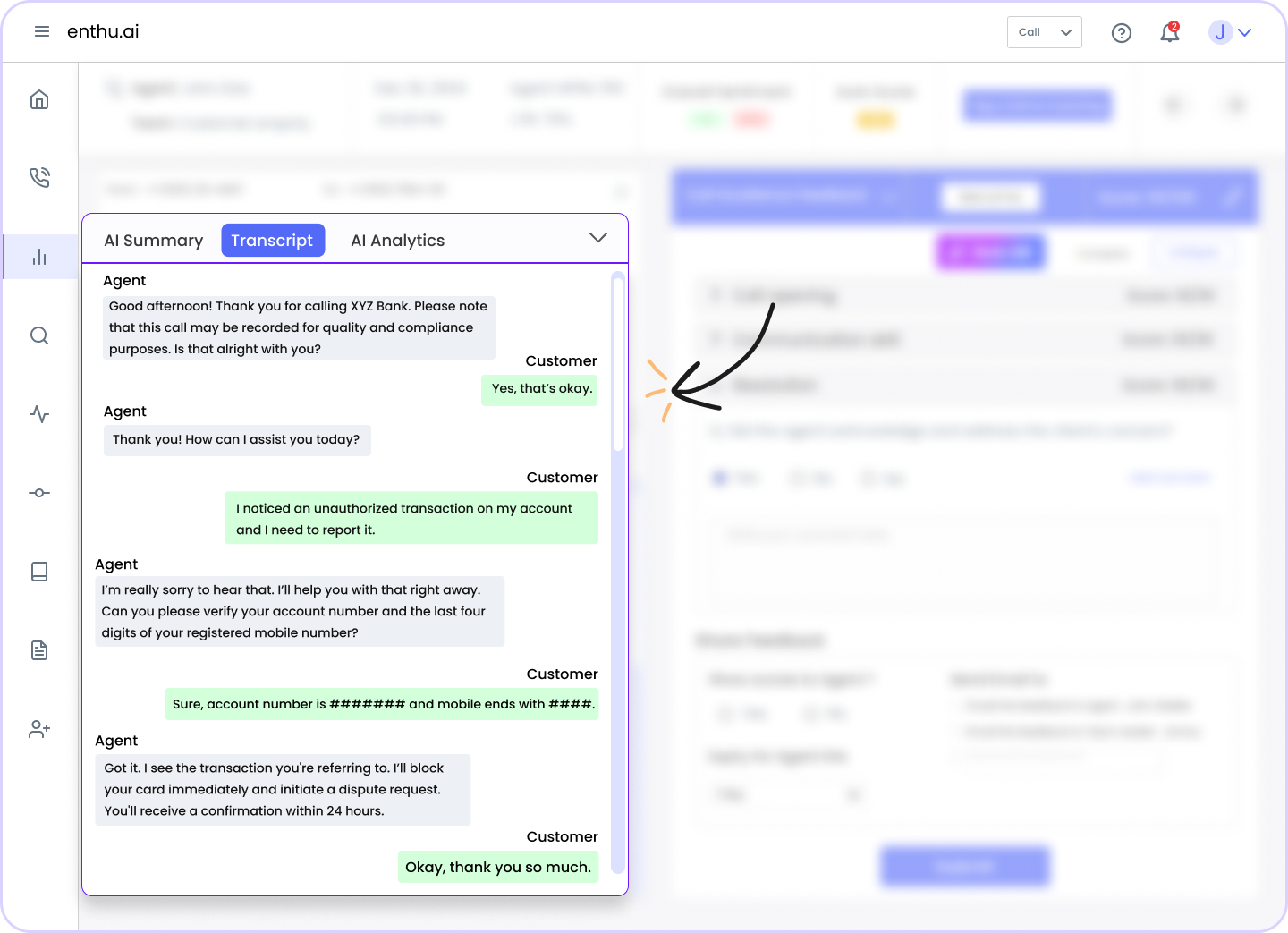

Every call, inbound or outbound, is captured by the system, either live or from your existing call recording infrastructure. The audio is then processed by an automatic speech recognition (ASR) engine, the speech-to-text front end of the Natural Language Processing (NLP) layer, which converts speech to text in real time.

Modern speech-to-text engines achieve 95%+ accuracy on standard English. Accuracy drops on heavy accents, industry jargon, or poor audio quality, which is why evaluating transcription accuracy on your own call sample before purchasing is critical.
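One common way to measure transcription accuracy on your own sample is word error rate (WER): the word-level edit distance between a human-verified reference transcript and the engine's output, divided by the number of reference words. The sketch below is a minimal illustration; the sample sentences are made up, and vendors may normalize punctuation and numbers differently before scoring, so treat the result as a rough comparison tool.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by reference length."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")

    # prev[j] = edit distance between the ref words seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion (word missing from hypothesis)
                curr[j - 1] + 1,     # insertion (extra word in hypothesis)
                prev[j - 1] + cost,  # substitution or match
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical sample: a human-verified transcript vs. the engine's output.
reference = "i would like to cancel my policy before the late fee applies"
hypothesis = "i would like to cancel my policy before the late fee apply"

wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.1%}")
```

Running this over a few dozen of your own calls, including accented speakers and jargon-heavy conversations, gives a more useful number than a vendor's headline benchmark.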

Technical note: The best engines use speaker diarization, the ability to separate agent and customer speech into independent streams. This is essential for silence analysis, talk-ratio measurement, and compliance monitoring (you need to know WHO said the disclaimer, not just that it was said).
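Once speech is separated by speaker, talk ratio and silence detection reduce to simple arithmetic over timestamped segments. The sketch below assumes the diarization step has already produced a list of segments; the `Segment` type, field names, and sample call are hypothetical, and the 8-second default mirrors the silence-zone threshold cited in this guide's coaching benchmark.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    speaker: str  # "agent" or "customer" (labels assumed from diarization)
    start: float  # seconds from call start
    end: float


def talk_ratio(segments, speaker="agent"):
    """Share of total speaking time that belongs to `speaker`."""
    total = sum(s.end - s.start for s in segments)
    if total == 0:
        return 0.0
    spoken = sum(s.end - s.start for s in segments if s.speaker == speaker)
    return spoken / total


def silence_gaps(segments, min_gap=8.0):
    """Return (start, end) pairs where nobody speaks for at least min_gap seconds."""
    ordered = sorted(segments, key=lambda s: s.start)
    gaps = []
    latest_end = ordered[0].end if ordered else 0.0
    for seg in ordered[1:]:
        if seg.start - latest_end >= min_gap:
            gaps.append((latest_end, seg.start))
        latest_end = max(latest_end, seg.end)  # handles overlapping speech
    return gaps


# Hypothetical diarized call.
segments = [
    Segment("agent", 0, 20),
    Segment("customer", 21, 30),
    Segment("agent", 40, 70),
    Segment("customer", 70.5, 75),
]

print(f"Agent talk ratio: {talk_ratio(segments):.0%}")
print(f"Long silences: {silence_gaps(segments)}")
```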

Step 2: AI analysis and pattern recognition (ML layer)

Once the call is transcribed, machine learning models analyze the text and audio together for patterns. This is where the real intelligence lives:

- Keyword and phrase tagging: Pre-defined libraries of terms (customizable) are flagged and time-stamped to the exact call moment.

- Sentiment scoring: Sentence-by-sentence emotional valence is calculated as positive, neutral, or negative, with escalation alerts when sentiment sharply declines mid-call.

- Behavioral flags: Interruptions, silence zones, script deviations, and energy level drops are identified automatically.

- Topic classification: Calls are categorized by primary topic and sub-topic, enabling trend analysis without manual tagging.
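The keyword-tagging step in the list above can be sketched in a few lines. This is a deliberately simple, rule-based illustration; the keyword library, categories, and sample utterances are hypothetical, and production systems typically add stemming, fuzzy matching, and ML-based classification on top.

```python
import re

# Hypothetical keyword library: category -> phrases to flag.
KEYWORDS = {
    "cancellation": ["cancel", "close my account"],
    "billing": ["late fee", "overcharged"],
    "dissatisfaction": ["not happy"],
}


def tag_keywords(utterances, library=KEYWORDS):
    """Flag library phrases in a transcript.

    utterances: list of (start_seconds, speaker, text) tuples.
    Returns one hit per matched phrase, time-stamped to the utterance start.
    """
    hits = []
    for start, speaker, text in utterances:
        for category, phrases in library.items():
            for phrase in phrases:
                # Whole-word match, case-insensitive.
                pattern = rf"\b{re.escape(phrase)}\b"
                if re.search(pattern, text, flags=re.IGNORECASE):
                    hits.append({
                        "time": start,
                        "speaker": speaker,
                        "category": category,
                        "phrase": phrase,
                    })
    return hits


# Hypothetical transcript excerpt.
call = [
    (12.0, "customer", "I'm not happy about this late fee."),
    (31.5, "agent", "I understand, let me look into that."),
    (48.2, "customer", "If it stays, I want to cancel."),
]

hits = tag_keywords(call)
for hit in hits:
    print(hit)
```

Because matching is whole-word, ‘cancel’ here would not match ‘cancellation’; that is the kind of gap a monthly keyword calibration is meant to catch.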

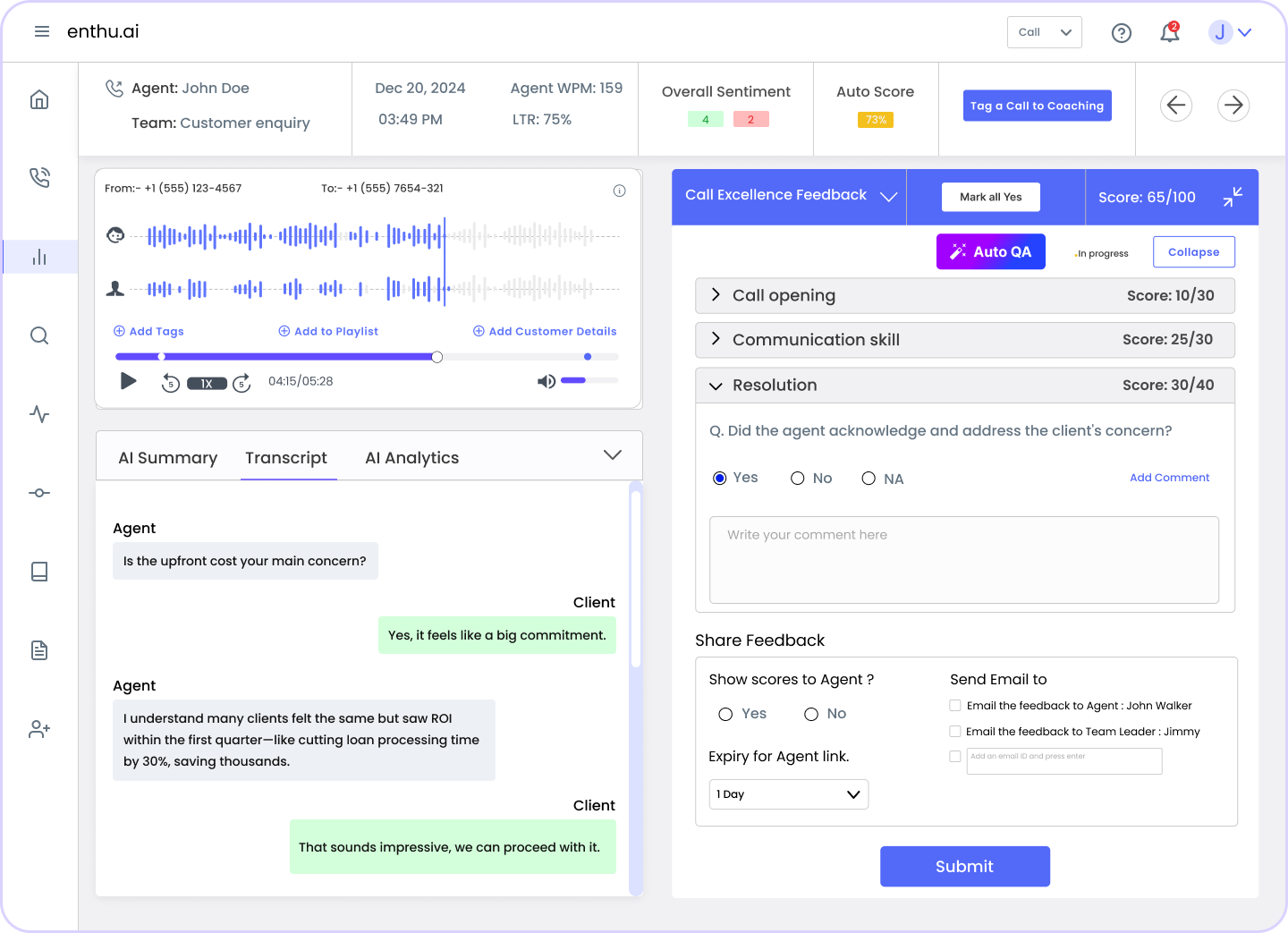

Step 3: Insight generation and action delivery (reporting layer)

Raw analysis becomes actionable insight through dashboards and automated workflows. Supervisors get agent-level scorecards. QA managers get compliance reports. Executives get CSAT trend data. And agents get targeted coaching feedback, all from the same system.

Because analysis happens in real time (or within minutes for post-call), teams don’t wait for weekly reviews. Issues surface the same day they occur.

4. Real-time vs post-call speech analytics: which does your contact center need?

This is one of the most common questions from call center leaders and one that no competitor adequately answers. The choice directly affects your infrastructure requirements, your use cases, and your ROI timeline.

| Dimension | Real-time speech analytics | Post-call speech analytics |
| --- | --- | --- |
| When it runs | During the live call | After the call ends |
| Primary use | Agent guidance, live compliance alerts, de-escalation cues | QA scoring, trend analysis, and coaching library |
| Who benefits | Agents on the call, supervisors monitoring live | QA teams, managers, trainers |
| Best for | Compliance-heavy industries (financial, insurance, healthcare) | Coaching programs, CSAT improvement, and root cause analysis |
| Infrastructure req. | Higher: needs a low-latency pipeline | Lower: batch processing works |
| Enthu.AI support | Yes (live transcription + scoring) | Yes (full suite) |

When to prioritize real-time analytics

Real-time speech analytics is the right choice when the cost of an in-call error is high: a missed compliance disclosure, a mishandled escalation, or a lost upsell moment. Common real-time use cases include:

- Live compliance alerts (financial services, insurance, healthcare)

- Agent prompts for objection handling or upsell cues

- Supervisor whisper coaching during escalating calls

- Immediate de-escalation flags for abusive or at-risk calls

When post-call analytics is sufficient

For most QA programs, coaching workflows, and trend analysis, post-call speech analytics delivers all the insight you need at lower infrastructure cost:

- Automated QA scoring of 100% of calls

- Coaching session prep (identify top teaching moments per agent)

- Root cause analysis for CSAT dips or churn spikes

- Competitive intelligence (what are customers mentioning about competitors?)

Best practice: Start with post-call analytics for your QA and coaching program. Once you have baseline performance data (typically 60–90 days), layer in real-time analytics for your highest-stakes call types first (e.g., compliance-sensitive calls, inbound escalations).

5. Five proven use cases with real outcomes

Use case 1: Automated QA: cover 100% of calls, not 2%

Traditional QA randomly samples 2–5 calls per agent per month. That means 95–98% of calls are never reviewed. For a 50-agent team handling 2,000 daily calls, that’s 1,900+ interactions flying under the radar every single day.

Speech analytics enables automated QA scoring on 100% of calls against your defined scorecard. Agents are evaluated consistently. Blind spots disappear. And because scoring is automated, your QA team can shift from data collection to coaching conversations.

Real outcome: A UK-based financial services contact center using Enthu.AI increased QA call coverage from 3% to 100% within the first 30 days of deployment without adding a single QA headcount. Compliance violations detected in month one were 4× higher than their previous manual sampling had shown.

Use case 2: Compliance and script adherence monitoring

In regulated industries, such as banking, insurance, healthcare, and utilities, missing a required disclosure isn’t a coaching issue. It’s a legal liability. Yet manual QA can’t catch what it doesn’t hear.

Speech analytics monitors every call for required disclosures, mandatory phrases, and prohibited language. Violations are flagged in real time (or within minutes post-call), enabling remediation before a complaint is filed or an audit is triggered.

- Financial services: APR disclosures, recording consent, identity verification

- Insurance: policy limitation disclosures, claims process explanation

- Healthcare: HIPAA authorization language, patient rights statements

- Utilities: tariff change disclosures, cancellation rights
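A disclosure check of this kind can be sketched as a phrase lookup restricted to the agent's side of the call. The required phrases and sample call below are hypothetical and far simpler than real regulatory scripts; exact-phrase matching is an assumption made for brevity, and real systems usually tolerate paraphrases.

```python
import re

# Hypothetical required disclosures: name -> phrase the agent must say.
REQUIRED_AGENT_PHRASES = {
    "recording_consent": "this call may be recorded",
    "identity_verification": "verify your identity",
}


def normalize(text):
    """Lowercase, strip punctuation, and collapse whitespace."""
    return " ".join(re.sub(r"[^a-z0-9\s]", " ", text.lower()).split())


def missing_disclosures(utterances, required=REQUIRED_AGENT_PHRASES):
    """Return the names of required phrases the AGENT never said.

    utterances: list of (speaker, text) tuples from a diarized transcript.
    """
    agent_text = " ".join(
        normalize(text) for speaker, text in utterances if speaker == "agent"
    )
    return sorted(
        name for name, phrase in required.items()
        if normalize(phrase) not in agent_text
    )


# Hypothetical call: the customer says a required phrase, the agent does not.
call = [
    ("agent", "Thanks for calling. This call may be recorded for quality purposes."),
    ("customer", "Sure. How do you verify your identity checks, by the way?"),
    ("agent", "Of course, what can I help with today?"),
]

print(missing_disclosures(call))
```

Only agent utterances are searched, so a customer repeating the phrase does not count as a completed disclosure.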

Use case 3: Agent coaching and performance improvement

The most effective coaching is specific, timely, and evidence-based. Speech analytics gives every team leader exactly that: the specific call moment, the exact transcript, and the pattern across multiple interactions.

Instead of generic feedback (‘work on empathy’), coaches can play back the 47-second segment where the agent’s tone shifted, show the customer’s escalating sentiment score, and role-play the alternative response. Coaching becomes a five-minute targeted conversation, not a 30-minute generic session.

Benchmark: Based on analysis of 500,000+ calls processed through Enthu.AI, the top 3 coaching triggers (agent interruptions, long silence zones of 8+ seconds, and missed empathy phrases) account for 67% of all low-scoring QA interactions.

Use case 4: Escalation and churn risk detection

Sentiment analysis tracks emotional state throughout a call. When a customer’s sentiment drops sharply, particularly in the last 25% of the call, it’s a strong predictor of churn or a callback. Speech analytics can flag these calls for same-day manager follow-up.

Common churn-risk language patterns to monitor:

- ‘I’ve called three times about this.’

- ‘If this isn’t resolved today, I’m leaving.’

- ‘I already spoke to someone, and nothing changed.’

- ‘Can I speak to a manager?’ (especially when repeated twice in one call)
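The two signals described above, a late-call sentiment drop and churn-risk phrases, can be combined into a simple flag. The sketch below assumes per-sentence sentiment scores between -1 and 1 already exist from an upstream model; the 0.4 drop threshold, the phrase list, and the function names are illustrative assumptions, not values from any vendor.

```python
from statistics import mean

# Hypothetical phrase fragments drawn from the patterns listed above.
CHURN_PHRASES = [
    "called three times",
    "i'm leaving",
    "nothing changed",
    "speak to a manager",
]


def late_call_sentiment_drop(scores, call_length, threshold=0.4):
    """True when mean sentiment in the final 25% of the call is at least
    `threshold` lower than the mean over the first 75%.

    scores: list of (time_seconds, sentiment) with sentiment in [-1, 1].
    """
    cutoff = 0.75 * call_length
    early = [s for t, s in scores if t < cutoff]
    late = [s for t, s in scores if t >= cutoff]
    if not early or not late:
        return False
    return mean(early) - mean(late) >= threshold


def churn_phrase_hits(customer_text):
    """Return the churn-risk phrases found in the customer's words."""
    text = customer_text.lower().replace("’", "'")
    return [p for p in CHURN_PHRASES if p in text]


def is_churn_risk(scores, call_length, customer_text):
    """Flag the call if either signal fires."""
    return (late_call_sentiment_drop(scores, call_length)
            or bool(churn_phrase_hits(customer_text)))


# Hypothetical 300-second call.
scores = [(10, 0.3), (60, 0.1), (150, 0.0), (250, -0.6), (290, -0.8)]
customer_text = "I've called three times about this. Can I speak to a manager?"

print(is_churn_risk(scores, 300, customer_text))
```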

Use case 5: Root cause analysis for CSAT drops

When CSAT dips, the instinct is to blame agents. Speech analytics tells you whether it’s actually a product issue, a policy change, a process gap, or a training failure, with data to back it up.

By correlating low CSAT scores with call topics, keywords, and sentiment patterns, you can answer: ‘Is our CSAT decline in week 3 caused by agents or by the new billing policy we rolled out?’ That question used to take weeks of manual analysis. Speech analytics answers it in minutes.
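A first pass at this kind of root cause question is to compare how much CSAT varies by call topic versus by agent. The sketch below uses made-up call records and a crude "spread" measure (highest group mean minus lowest); a real analysis would use far more data and proper statistics, so read it as an illustration of the idea only.

```python
from collections import defaultdict


def mean_csat_by(calls, key):
    """Average CSAT grouped by `key` ('topic' or 'agent'), rounded to 2 places."""
    groups = defaultdict(list)
    for call in calls:
        groups[call[key]].append(call["csat"])
    return {name: round(sum(v) / len(v), 2) for name, v in groups.items()}


def spread(means):
    """Gap between the best and worst group average."""
    return round(max(means.values()) - min(means.values()), 2)


# Hypothetical week of tagged calls with CSAT scores (1-5).
calls = [
    {"agent": "A", "topic": "billing", "csat": 2},
    {"agent": "B", "topic": "billing", "csat": 1},
    {"agent": "C", "topic": "billing", "csat": 2},
    {"agent": "A", "topic": "technical support", "csat": 5},
    {"agent": "B", "topic": "technical support", "csat": 4},
    {"agent": "C", "topic": "technical support", "csat": 4},
]

topic_means = mean_csat_by(calls, "topic")
agent_means = mean_csat_by(calls, "agent")

print("By topic:", topic_means, "spread:", spread(topic_means))
print("By agent:", agent_means, "spread:", spread(agent_means))
```

In this toy data every agent scores poorly on billing calls and well on technical support, so the gap between topics is much larger than the gap between agents. That pattern points toward a policy or process cause rather than an individual training issue.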

6. How to implement speech analytics in a call center? (step-by-step, 30-day plan)

Implementation of cloud-based speech analytics typically takes 2–4 weeks for standard contact center environments. Here’s the plan most successful deployments follow:

| Step | Action | Timeline | Owner |
| --- | --- | --- | --- |
| 1 | Define goals and KPIs (CSAT, AHT, compliance rate) | Week 1 | Operations / QA lead |
| 2 | Select and procure your speech analytics platform | Week 1–2 | IT + QA |
| 3 | Integrate with phone system / CRM / help desk | Week 2–3 | IT |
| 4 | Configure keywords, scorecards, and compliance flags | Week 3 | QA team |
| 5 | Run a pilot with 10% of the call volume, and validate accuracy | Week 4 | QA + agents |
| 6 | Agent onboarding and change management session | Week 4 | Team leads |
| 7 | Full rollout; track KPIs at 30/60/90 day intervals | Week 5+ | All |

The agent adoption challenge: how to get buy-in

The most common implementation failure isn’t technical; it’s cultural. Agents hear ‘speech analytics’ and immediately think: ‘Management is spying on me.’ Addressing this proactively is the single biggest factor in successful adoption.

What to communicate to agents before launch:

- Speech analytics evaluates every call the same way: no favoritism, no inconsistency. It actually creates fairer reviews than random sampling.

- The system surfaces coaching opportunities, not just mistakes. It will help them improve, not just flag errors.

- Top performers benefit most because their good calls become coaching examples for the whole team.

- Scores are used for development, not punishment. Establish this clearly in your QA policy before launch.

Proven tactic: Share the first 10 calls the system flagged as high-performing with those agents specifically. When people see the system catching them doing things right, adoption resistance drops significantly.

7. Speech analytics ROI: what to expect and how to calculate it

ROI from speech analytics comes from four primary levers. Understanding each helps you build the business case for leadership approval.

The four ROI levers

- Reduced average handle time (AHT): Targeted coaching on specific talk behaviors (silence, over-talking, excessive holds) typically reduces AHT by 10–20% within 90 days.

- Improved first-call resolution (FCR): Root cause analysis reveals the exact conversation moments where agents fail to resolve issues; fixing these directly improves FCR and reduces repeat calls.

- Compliance cost avoidance: A single regulatory fine in financial services or healthcare can run $50,000–$500,000+. Speech analytics catches compliance violations before they become legal events.

- QA staffing efficiency: Automating QA scoring for 100% of calls eliminates 30–50% of manual QA hours, freeing those resources for coaching and training.

ROI calculation worksheet

Use this formula to estimate your monthly savings from AHT reduction alone:

Monthly savings = (Agents × Daily calls per agent × Avg duration in minutes × 22 working days × AHT reduction % × Hourly cost) ÷ 60

| Variable | Your number | Example (50 agents) |
| --- | --- | --- |
| Number of agents | ____ | 50 |
| Avg calls per agent per day | ____ | 40 |
| Avg call duration (mins) | ____ | 6 min |
| Agent hourly cost (fully loaded) | ____ | $22/hr |
| AHT reduction from coaching (industry avg) | ____ | 15% |
| Monthly savings (calculated) | ____ | ~$14,520/month |

With these inputs the formula gives 50 × 40 × 6 × 22 = 264,000 handled minutes per month; a 15% reduction saves 39,600 minutes, or 660 agent-hours, which at $22/hr is about $14,520/month. That is the saving from AHT reduction alone, before accounting for compliance savings, FCR improvement, or QA efficiency gains. Most contact centers see full payback within 2–3 months.
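The worksheet formula translates directly into a small function, which makes it easy to test different scenarios. This is a minimal sketch that evaluates the formula exactly as written above; the function and parameter names are illustrative, and the inputs are the example column from the worksheet.

```python
def monthly_aht_savings(
    agents: int,
    calls_per_agent_per_day: float,
    avg_call_minutes: float,
    hourly_cost: float,
    aht_reduction: float,
    working_days: int = 22,
) -> float:
    """Estimated monthly savings from reduced average handle time.

    aht_reduction is a fraction (0.15 for 15%); hourly_cost is fully loaded.
    """
    minutes_handled = (
        agents * calls_per_agent_per_day * avg_call_minutes * working_days
    )
    hours_saved = minutes_handled * aht_reduction / 60
    return hours_saved * hourly_cost


# Example inputs from the worksheet: 50 agents, 40 calls/agent/day,
# 6-minute calls, $22/hr fully loaded, 15% AHT reduction.
savings = monthly_aht_savings(50, 40, 6, 22.0, 0.15)
print(f"Estimated monthly savings: ${savings:,.0f}")
```

Try a conservative case as well (for example, a 10% reduction) so the business case does not depend on the best-case assumption.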

8. Compliance and data privacy: GDPR, PCI DSS, and HIPAA considerations

For enterprise buyers in the UK, EU, UAE, and the US, compliance isn’t a nice-to-have; it’s a pre-condition for sign-off. Here’s what you need to know before deploying speech analytics in a regulated environment.

GDPR (EU and UK)

- Call recording and speech analytics processing require a lawful basis under GDPR, typically ‘legitimate interests’ or ‘consent.’

- Agents and customers must be informed about call recording and AI processing at the start of the call

- Data retention policies must be defined: most contact centers set 90–180 days for speech analytics data

- Data subject access requests (DSARs) must be fulfillable; your vendor must support data export or deletion by individual call

- Look for: GDPR-compliant data processing agreements (DPAs) from your vendor, EU/UK data residency options

PCI DSS (Payment card data)

- Speech analytics systems must either pause recording during card number entry OR redact card data from transcripts automatically

- Most enterprise platforms support ‘pause-resume’ recording or DTMF masking for payment segments

- Verify your vendor is PCI DSS compliant, not just your phone system
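Transcript redaction of card numbers can be illustrated with a pattern match plus a Luhn checksum to cut false positives. This is a sketch only, with hypothetical function names and placeholder text; it covers digit strings in transcripts, not numbers spoken as words or the underlying audio, and it is not a substitute for a vendor's validated PCI DSS controls.

```python
import re

# 13-19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){12,18}\d\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum used by payment card numbers."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def redact_card_numbers(text: str) -> str:
    """Replace Luhn-valid card-like digit runs with a placeholder."""
    def _mask(match):
        digits = re.sub(r"\D", "", match.group())
        return "[REDACTED CARD]" if luhn_valid(digits) else match.group()
    return CARD_CANDIDATE.sub(_mask, text)


# 4111 1111 1111 1111 is a widely used test card number, not a real account.
print(redact_card_numbers("Sure, it's 4111 1111 1111 1111, expiry next year."))
print(redact_card_numbers("Order 1234 5678 9012 3456"))
```

The second line is left untouched because that digit run fails the Luhn check, which keeps order numbers and similar identifiers readable in transcripts.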

HIPAA (US healthcare)

- Speech analytics involving protected health information (PHI) requires a Business Associate Agreement (BAA) with your vendor

- PHI mentioned in calls must be encrypted in transit and at rest

- Access controls must limit who can query or review healthcare calls

Key question for vendors: Ask every speech analytics vendor: ‘Do you offer a signed BAA / DPA / GDPR data processing agreement as standard?’ Reputable vendors answer yes immediately. Any hesitation is a red flag.

9. How to choose speech analytics software? 7 evaluation criteria

Not all speech analytics platforms are equal. The market ranges from single-feature call recording add-ons to full conversation intelligence suites. Here’s the framework for evaluating objectively:

| Criterion | What to look for | Red flag |
| --- | --- | --- |
| Transcription accuracy | 95%+ accuracy on accented English, industry jargon | No published accuracy benchmarks |
| Real-time capability | Sub-2-second latency for live guidance | Post-call only, no live option |
| CRM / phone integrations | Native connectors for Salesforce, HubSpot, Zendesk, major VoIP | Manual file upload only |
| Compliance certifications | SOC 2 Type II, ISO 27001, GDPR-ready, HIPAA if healthcare | No certifications listed |
| Reporting depth | Agent-level, team-level, trend dashboards, exportable data | Only aggregate dashboards |
| Ease of use | QA managers can configure scorecards without IT | Requires developer support for every change |
| Pricing model | Per agent per month: predictable at scale | Per minute of audio: costs explode at volume |

Questions to ask every vendor in your demo

- Show me your transcription accuracy on a sample of our actual calls, not your benchmark calls.

- How long does a standard integration with [our phone system] take?

- Can we customize QA scorecards without developer support?

- What is your data residency policy, and where are calls stored?

- Show me an agent-level coaching report. What does a QA manager actually see?

- What does your onboarding and implementation support include?

Enthu.AI is a conversation intelligence platform built specifically for contact centers. It supports 100% call coverage, GenAI-powered auto QA, real-time transcription, sentiment analysis, and agent coaching workflows, and it integrates with 50+ phone systems and CRMs. Most customers go live within 2 weeks.

10. Speech analytics best practices (from 500K+ call deployments)

Based on deploying speech analytics across 200+ contact center programs, these are the practices that consistently separate high-performing deployments from ones that stall after 90 days:

1. Start with your highest-pain QA category

Don’t try to monitor everything at once. Identify your biggest QA problem (compliance failures, escalation rates, or FCR) and build your first keyword library and scorecard around that. Early wins in a focused area drive adoption and executive support.

2. Calibrate your keyword library every 30 days

Markets change, products change, and call topics shift. A keyword library configured in month one will lose relevance by month three without updates. Schedule a monthly 30-minute calibration session with your QA lead to add new terms, remove false positives, and adjust sentiment triggers.

3. Build a ‘coaching moments’ playlist, not just a violations list

The most effective use of speech analytics for agent development is surfacing great calls, not just bad ones. Tag high-scoring interactions and build coaching playlists that show the team what ‘good’ sounds like. This turns speech analytics from a surveillance tool into a development tool and kills adoption resistance.

4. Connect speech analytics to your CSAT data

Speech analytics without outcome correlation is just monitoring. Connect your CSAT scores to call records and let the system surface what separates 4-star from 1-star interactions. This is where the real insight lives, and it’s a feature that most teams never configure.

5. Report at the team level publicly, agent level privately

Share team-level speech analytics trends in team meetings (improving, declining, benchmark comparison). Keep individual agent scorecards in 1:1 coaching conversations. This structure drives team accountability without creating the surveillance anxiety that kills morale and adoption.

FAQs

1. What is speech analytics?

Speech analytics in call centers helps enhance customer service by monitoring agent performance, identifying compliance issues, and uncovering customer insights, enabling data-driven decisions to improve operations and customer satisfaction.

2. What are the metrics of speech analytics?

Common speech analytics metrics include sentiment scores, silence/overtalk rate, keyword frequency, conversation duration, first-call resolution (FCR), customer satisfaction (CSAT), and compliance adherence. These metrics help evaluate call quality, customer experience, and agent performance.

3. What is an example of speech analytics?

Sentiment analysis of customer care call recordings is one use of speech analytics that detects emotions. Companies can measure customer satisfaction and optimize agent responses to improve service quality by identifying phrases like “frustrated” or changes in tone.
